This post is part of the series 'Web Performance'. Be sure to check out the rest of the blog posts of the series!

- 💰 Better user experience means better conversion rate

Users don't like to wait. If your page takes too long to load, they may leave and go to another website. This is even more true on mobile, where connections are often slower than on desktop computers. Some studies show that 46% of users will not return to a poorly optimized site (source), and that 53% of mobile visitors leave a page that takes longer than three seconds to load (source).

A 2-second delay in load time during a transaction results in abandonment rates of up to 87%.

Case Study: How a 2-Second Improvement in Page Load Time More Than Doubled Conversions

- 💰 SEO (Search Engine Optimization)

Google has indicated that page speed is one of the criteria its algorithm uses to rank pages. I don't think performance alone will push your website to the top of the results, but poor performance may push it down to the second page or worse.

In addition, slow pages mean that search engines can crawl fewer pages within their allocated crawl budget, which could negatively affect your indexing.

Optimizing your website may reduce the load on your servers, so the same server can serve more users. Thus, the hosting cost may be reduced. This is even more true now that more and more services are hosted in the cloud, where you pay for the resources you use. The fewer resources you use, the less you pay.

If your website requires less CPU, less GPU, or less network traffic, it consumes less energy and produces less heat. It is a way to help fight global warming! Note that you can reduce the ecological footprint of your website on both the server side and the client side.

#What happens when a user navigates to your website?

When you access a web page such as https://www.meziantou.net, the browser executes many steps before the page is rendered. Understanding these steps will help you find places for improvement. I won't list every step because that would require more than one blog post. Here are the most important steps, where we'll see potential performance improvements:

- Query the DNS server to resolve the IP address from the domain name

- Initiate a TCP connection to the server

- Make an HTTP GET request to the server

- The server processes the request and sends the response

- The browser checks if the response is a redirect or a conditional response (3xx result status codes), authorization request (401), error (4xx and 5xx), etc.; these are handled differently from normal responses (2xx)

- The browser decodes the response if it is encoded (gzip, Brotli, etc.)

- The browser determines what to do with the response (e.g. is it an HTML page, an image, a binary file, etc.?)

- The browser renders the response, or offers a download dialog for unrecognized types. If the response is an HTML file, rendering the page requires additional steps:

  - Downloading resources such as images, CSS stylesheets, JavaScript files (DNS queries may be required for external domains)

  - Parsing JavaScript and CSS files

  - Executing JavaScript when needed

  - Computing the layout of the page

  - Rendering the page

This is just a quick overview. If you want to go deeper, you can read these posts:

#Measure before making changes

If you can not measure it, you can not improve it.

Peter Drucker

Before optimizing anything, it's important to be able to measure the improvements your changes bring.

- Define the metrics you are interested in

- Decide what kind of statistics you need: average, min, max, percentiles, etc.

- Set a goal for each metric

- Measure the metric
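As an illustration of the statistics mentioned above, here is a minimal sketch that summarizes a set of measurements. The `percentile` and `summarize` helpers and the sample load times are hypothetical, and the percentile uses the nearest-rank method, which is only one of several possible definitions.

```typescript
// Returns the p-th percentile of an already sorted array (nearest-rank method).
function percentile(sortedValues: number[], p: number): number {
  if (sortedValues.length === 0) throw new Error("no samples");
  const rank = Math.ceil((p / 100) * sortedValues.length);
  return sortedValues[Math.max(rank, 1) - 1];
}

interface Summary {
  min: number;
  max: number;
  average: number;
  p50: number;
  p95: number;
}

// Computes the basic statistics for a list of measurements (e.g. in milliseconds).
function summarize(samples: number[]): Summary {
  const sorted = [...samples].sort((a, b) => a - b);
  const sum = sorted.reduce((acc, v) => acc + v, 0);
  return {
    min: sorted[0],
    max: sorted[sorted.length - 1],
    average: sum / sorted.length,
    p50: percentile(sorted, 50),
    p95: percentile(sorted, 95),
  };
}

// Hypothetical page load times, in milliseconds
const loadTimes = [120, 135, 150, 160, 180, 200, 220, 250, 400, 2000];
const summary = summarize(loadTimes);
console.log(summary);
```

In this made-up sample, a single slow request pulls the average well above the median, which is why percentiles are often more informative than the average alone.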

There are common metrics that you can measure:

- First Contentful Paint measures the time from navigation to the time when the browser renders the first bit of content from the DOM

- First Meaningful Paint is the paint after which the biggest above-the-fold layout change has happened

- The Time to Interactive measures how long it takes a page to become interactive

- The First CPU Idle measures when a page is minimally interactive

You should measure these metrics under the expected load on your website. If you expect 1000 concurrent users at any time, it's useless to measure the performance when there is only a single user.

Here are some tools you can use to measure common metrics:

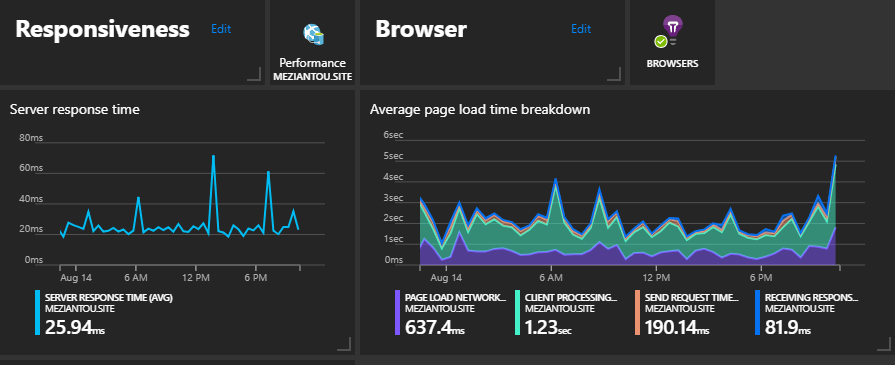

##Microsoft Application Insights

Application Insights shows page load times from your visitors. It allows you to measure the server-side and client-side performance. It also measures additional data such as CPU and memory usage or the number of visitors and requests. You can track custom measures. This could be useful to track the usage of some features or the time to execute a task.

There are some default graphs and queries, and you can create your own queries to measure your metrics. You can also create a custom dashboard with your KPIs.

Application Insights


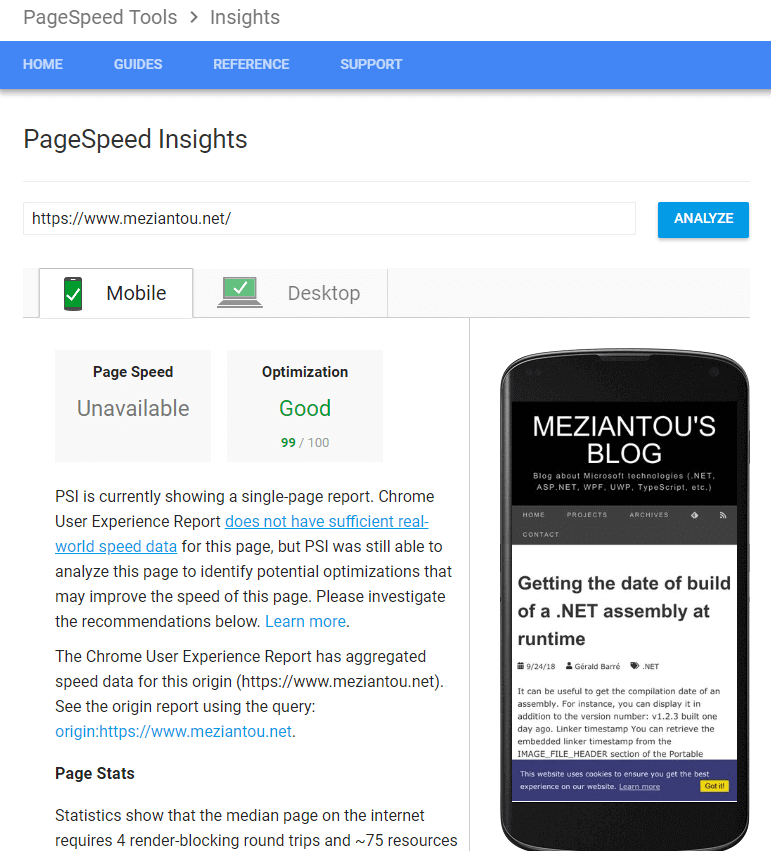

##Google PageSpeed Insights

PageSpeed Insights reports on the real-world performance of a page for mobile and desktop devices and provides suggestions on how that page may be improved.

PageSpeed Insights checks about ten rules and provides documentation to fix all of them.

Google PageSpeed report


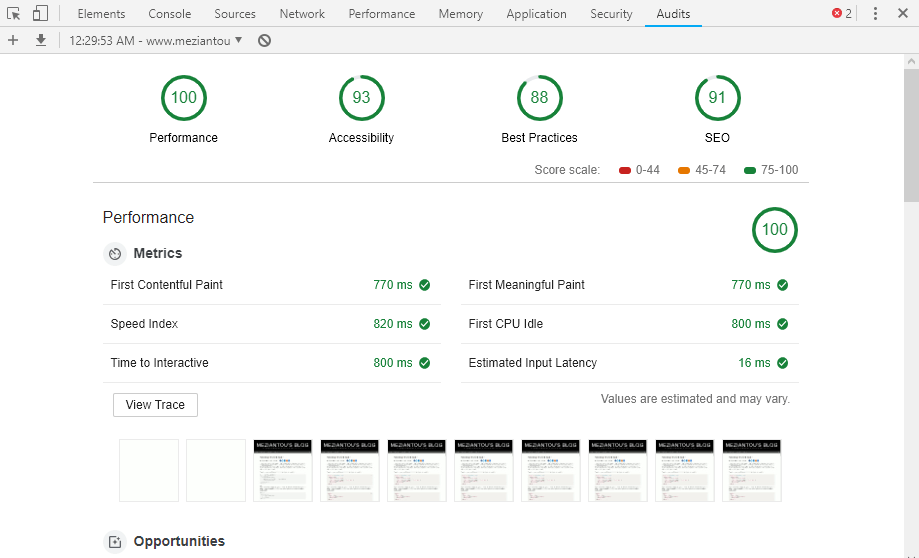

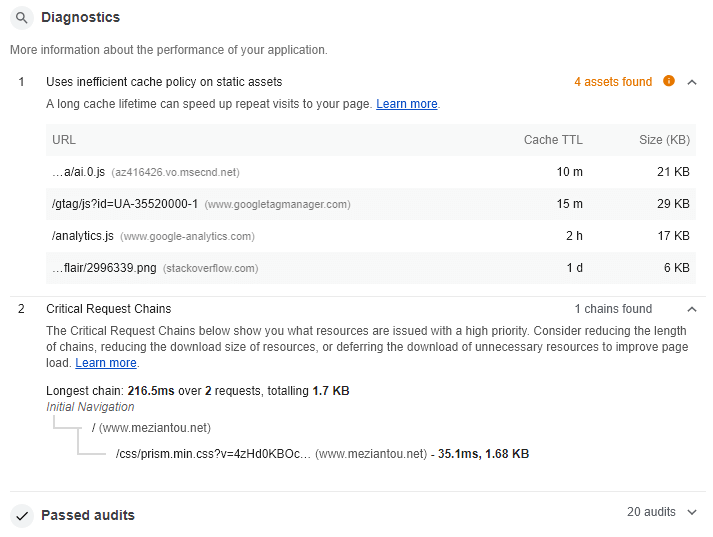

##Lighthouse

Lighthouse is an open-source, automated tool for improving the quality of web pages. You can run it against any web page, whether it is public or requires authentication. It has audits for performance, accessibility, progressive web apps, and more. Lighthouse is integrated into the Google Chrome Developer Tools.

This is a very good tool to get a quick audit of your website. It lists issues and explains how to fix them to improve the performance of your website. One of the things I like is the series of screenshots of the page taken at regular intervals. This allows you to check how your page evolves while it loads.

Lighthouse


Lighthouse - Diagnostics


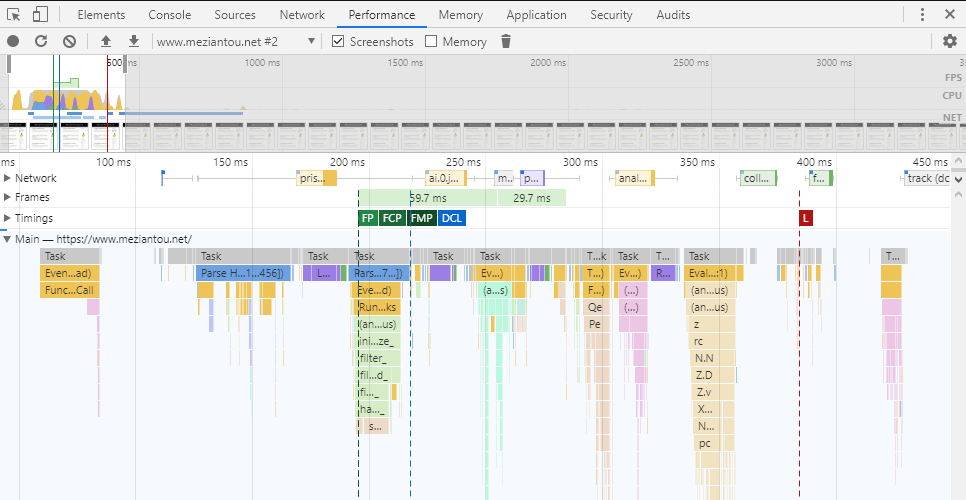

The developer tools of Google Chrome, Firefox, and Edge contain lots of tools. For performance work, the Network, Performance, and Memory tabs are the most useful.

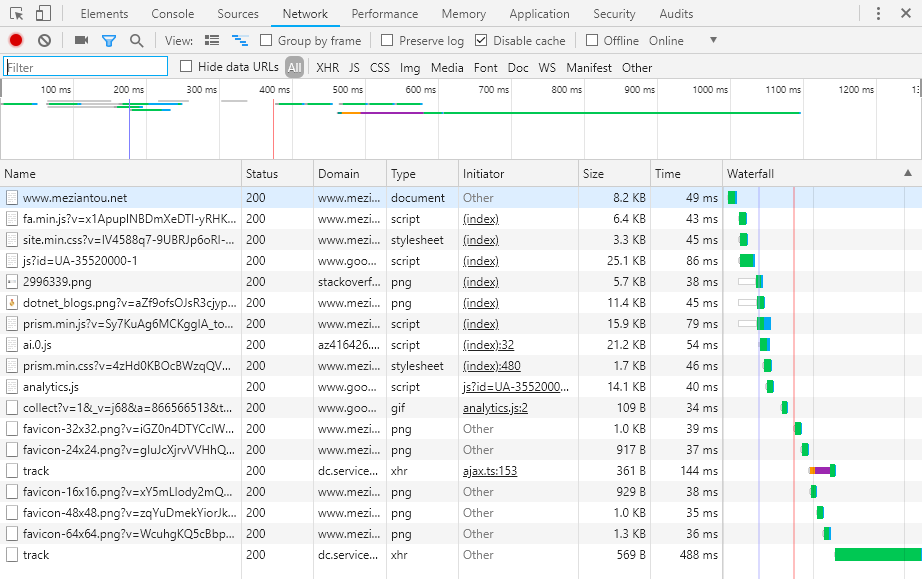

The network tool allows you to view all the requests and their content. This helps you check whether every request is actually needed. Thanks to the waterfall chart, you can check whether resources are downloaded in parallel or sequentially. You can also check which protocol is used and the size of the payload.

The performance tool allows you to check how your website behaves at runtime. Does your JavaScript block your website? Do you force the browser to compute the layout more than needed?

The memory tool allows you to detect memory leaks. This is not very useful for static pages. But if you are developing a Single Page Application (SPA), it is important to ensure your application is not leaking memory, because it may become unstable after a few minutes or hours of usage. You can easily find detached DOM trees. You can also check whether there are too many garbage collections, in which case you may need to rework your JavaScript to reduce the memory pressure of your application.

Last but not least, you can simulate a slow network to see how your website behaves under degraded conditions. For instance, you can simulate a slow 3G connection, so all network calls have added latency. You can also simulate a slower CPU to ensure your JavaScript is not too slow on less powerful devices. Indeed, you often test your website on a computer, but many of your users probably visit it using their cell phones, which are often slower than your computer.

The audit panel allows you to see on one screen:

- The network calls

- Some metrics such as the First Paint (FP), the First Contentful Paint (FCP), the First Meaningful Paint (FMP), the DOMContentLoaded event, the Loaded event

- The blocking JavaScript

Chromium Developer Tools - Audit


The network tab allows you to see all the network calls, the size of the resources, the loading time, and when the download starts:

Chromium Developer Tools - Network panel


##Browser APIs

Browsers capture some metrics and expose them through APIs. You can use these APIs from JavaScript to diagnose performance issues. Here are some of the available APIs:

You can use the Navigation Timing API to gather performance data on the client-side. Also, the API lets you measure data that was previously difficult to obtain, such as the amount of time needed to unload the previous page, how long domain lookups take, the total time spent executing the window's load handler, and so forth.
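As a rough sketch of how navigation timing data can be broken down into phases, the function below works on a plain object that mirrors a subset of the `PerformanceNavigationTiming` fields. The `NavigationTimingLike` interface, the `breakdownNavigation` helper, and the sample values are assumptions made for illustration, and the phase names are informal.

```typescript
// Subset of the PerformanceNavigationTiming fields used below (all in milliseconds).
interface NavigationTimingLike {
  startTime: number;
  domainLookupStart: number;
  domainLookupEnd: number;
  connectStart: number;
  connectEnd: number;
  requestStart: number;
  responseStart: number;
  responseEnd: number;
  domContentLoadedEventEnd: number;
  loadEventStart: number;
  loadEventEnd: number;
}

// Splits a navigation into a few phases that are easy to compare between runs.
function breakdownNavigation(t: NavigationTimingLike) {
  return {
    dnsLookup: t.domainLookupEnd - t.domainLookupStart,
    connection: t.connectEnd - t.connectStart, // TCP handshake, plus TLS if any
    waitingForResponse: t.responseStart - t.requestStart,
    download: t.responseEnd - t.responseStart,
    domContentLoaded: t.domContentLoadedEventEnd - t.startTime,
    loadHandler: t.loadEventEnd - t.loadEventStart,
    total: t.loadEventEnd - t.startTime,
  };
}

// Hypothetical values, as a browser could report them after the load event
const sampleNavigation: NavigationTimingLike = {
  startTime: 0,
  domainLookupStart: 5,
  domainLookupEnd: 25,
  connectStart: 25,
  connectEnd: 85,
  requestStart: 90,
  responseStart: 290,
  responseEnd: 340,
  domContentLoadedEventEnd: 800,
  loadEventStart: 1200,
  loadEventEnd: 1250,
};
const navigationPhases = breakdownNavigation(sampleNavigation);
console.log(navigationPhases);

// In a browser, once the page has finished loading:
//   const [entry] = performance.getEntriesByType("navigation");
//   console.table(breakdownNavigation(entry as PerformanceNavigationTiming));
```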

The PerformanceResourceTiming interface enables retrieval and analysis of detailed network timing data regarding the loading of an application's resources. An application can use the timing metrics to determine, for example, the length of time it takes to fetch a specific resource, such as an XMLHttpRequest, image, or script.
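One possible use of resource timing data is to find the resources that take the longest to load. The sketch below assumes a simplified `ResourceTimingLike` shape with a few of the real `PerformanceResourceTiming` fields; the helper names and the sample entries are hypothetical.

```typescript
// Subset of the PerformanceResourceTiming fields used below.
interface ResourceTimingLike {
  name: string; // URL of the resource
  initiatorType: string; // e.g. "script", "img", "link"
  duration: number; // milliseconds
  transferSize: number; // bytes sent over the network
}

// Returns the `count` resources with the longest duration, slowest first.
function slowestResources(entries: ResourceTimingLike[], count: number): ResourceTimingLike[] {
  return [...entries].sort((a, b) => b.duration - a.duration).slice(0, count);
}

// Sums the number of bytes transferred for all resources.
function totalTransferSize(entries: ResourceTimingLike[]): number {
  return entries.reduce((total, entry) => total + entry.transferSize, 0);
}

// Hypothetical entries
const sampleResources: ResourceTimingLike[] = [
  { name: "https://example.com/app.js", initiatorType: "script", duration: 320, transferSize: 150000 },
  { name: "https://example.com/style.css", initiatorType: "link", duration: 80, transferSize: 20000 },
  { name: "https://example.com/hero.jpg", initiatorType: "img", duration: 450, transferSize: 400000 },
];
const slowest = slowestResources(sampleResources, 2);
const transferred = totalTransferSize(sampleResources);
console.log(slowest.map((r) => r.name), transferred);

// In a browser:
//   const entries = performance.getEntriesByType("resource") as PerformanceResourceTiming[];
//   console.table(slowestResources(entries, 5));
```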

The PerformancePaintTiming interface provides timing information about "paint" (also called "render") operations during web page construction. "Paint" refers to the conversion of the render tree to on-screen pixels.
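Paint entries are identified by their name, `first-paint` or `first-contentful-paint`. Here is a small sketch that extracts both values from a list of entries; the `PaintEntryLike` interface, the `paintTimes` helper, and the sample values are assumptions for illustration.

```typescript
// Subset of the PerformancePaintTiming fields used below.
interface PaintEntryLike {
  name: string; // "first-paint" or "first-contentful-paint"
  startTime: number; // milliseconds since the start of the navigation
}

interface PaintTimes {
  firstPaint?: number;
  firstContentfulPaint?: number;
}

// Picks the two paint milestones out of a list of paint entries.
// A value stays undefined when the browser did not report the entry.
function paintTimes(entries: PaintEntryLike[]): PaintTimes {
  return {
    firstPaint: entries.find((e) => e.name === "first-paint")?.startTime,
    firstContentfulPaint: entries.find((e) => e.name === "first-contentful-paint")?.startTime,
  };
}

// Hypothetical entries
const samplePaints: PaintEntryLike[] = [
  { name: "first-paint", startTime: 310 },
  { name: "first-contentful-paint", startTime: 420 },
];
const paints = paintTimes(samplePaints);
console.log(paints);

// In a browser:
//   console.log(paintTimes(performance.getEntriesByType("paint")));
```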

You can use the PerformanceLongTaskTiming interface to identify tasks that block the UI or other tasks as well. To the user this is commonly visible as a "locked up" page where the browser is unable to respond to user input; this is a major source of bad user experience on the web today.
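Long task entries are reported for tasks that run for 50 ms or more. A simple way to summarize them is to add up the time spent beyond that threshold, as sketched below. The `LongTaskLike` interface, the `summarizeLongTasks` helper, and the sample durations are hypothetical, and this sum is only an approximation of what tools such as Lighthouse report as blocking time.

```typescript
// Subset of the PerformanceLongTaskTiming fields used below.
interface LongTaskLike {
  startTime: number; // milliseconds
  duration: number; // milliseconds
}

const LONG_TASK_THRESHOLD_MS = 50;

// Summarizes long tasks: how many there are, the longest one,
// and the total time spent above the 50 ms threshold.
function summarizeLongTasks(tasks: LongTaskLike[]) {
  let blockingTime = 0;
  let longest = 0;
  for (const task of tasks) {
    blockingTime += Math.max(0, task.duration - LONG_TASK_THRESHOLD_MS);
    longest = Math.max(longest, task.duration);
  }
  return { count: tasks.length, longest, blockingTime };
}

// Hypothetical entries
const sampleTasks: LongTaskLike[] = [
  { startTime: 500, duration: 120 },
  { startTime: 900, duration: 60 },
  { startTime: 1500, duration: 250 },
];
const longTaskSummary = summarizeLongTasks(sampleTasks);
console.log(longTaskSummary);

// In a browser that supports long tasks (Chromium-based browsers at the time of writing):
//   new PerformanceObserver((list) => {
//     console.log(summarizeLongTasks(list.getEntries()));
//   }).observe({ type: "longtask", buffered: true });
```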

The ElementTiming API will allow developers to know when certain important image elements are first displayed on the screen. It will also enable analytics providers to measure the display time of images that take up a large fraction of the viewport when they first show up.
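Element timing entries carry the value of the `elementtiming` attribute as their `identifier`. The sketch below maps each identifier to the time the element was displayed. The `ElementTimingLike` interface, the `displayTimes` helper, and the sample entries are assumptions for illustration. The fallback to `loadTime` is there because `renderTime` can be reported as 0, for example for some cross-origin images.

```typescript
// Subset of the PerformanceElementTiming fields used below.
interface ElementTimingLike {
  identifier: string; // value of the elementtiming attribute
  renderTime: number; // milliseconds, may be 0
  loadTime: number; // milliseconds, 0 for text elements
}

// Maps each annotated element to the best available display time.
function displayTimes(entries: ElementTimingLike[]): Record<string, number> {
  const result: Record<string, number> = {};
  for (const entry of entries) {
    result[entry.identifier] = entry.renderTime > 0 ? entry.renderTime : entry.loadTime;
  }
  return result;
}

// Hypothetical entries for <img elementtiming="hero-image"> and <h1 elementtiming="main-title">
const sampleElements: ElementTimingLike[] = [
  { identifier: "hero-image", renderTime: 0, loadTime: 640 },
  { identifier: "main-title", renderTime: 380, loadTime: 0 },
];
const elementTimes = displayTimes(sampleElements);
console.log(elementTimes);

// In a browser that supports element timing:
//   new PerformanceObserver((list) => {
//     console.log(displayTimes(list.getEntries() as unknown as ElementTimingLike[]));
//   }).observe({ type: "element", buffered: true });
```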

The Layout Instability API allows developers to monitor the degree to which DOM elements have changed their on-screen position ("layout jank") during the user's session.
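Layout shift entries, as exposed by the Layout Instability API in Chromium-based browsers, each carry a score in `value` and a `hadRecentInput` flag. A simple way to aggregate them is to sum the scores of the shifts that were not caused by recent user input, as in this sketch. The `LayoutShiftLike` interface, the `totalLayoutShift` helper, and the sample values are hypothetical, and tools may aggregate the scores differently (for example per time window).

```typescript
// Subset of the LayoutShift entry fields used below.
interface LayoutShiftLike {
  value: number; // layout shift score of this entry
  hadRecentInput: boolean; // true when the shift follows a recent user input
}

// Sums the scores of unexpected shifts (those not triggered by user input).
function totalLayoutShift(entries: LayoutShiftLike[]): number {
  return entries
    .filter((entry) => !entry.hadRecentInput)
    .reduce((total, entry) => total + entry.value, 0);
}

// Hypothetical entries
const sampleShifts: LayoutShiftLike[] = [
  { value: 0.125, hadRecentInput: false },
  { value: 0.5, hadRecentInput: true }, // ignored: caused by user input
  { value: 0.25, hadRecentInput: false },
];
const layoutShiftScore = totalLayoutShift(sampleShifts);
console.log(layoutShiftScore);

// In a browser that supports the API:
//   new PerformanceObserver((list) => {
//     console.log(totalLayoutShift(list.getEntries() as unknown as LayoutShiftLike[]));
//   }).observe({ type: "layout-shift", buffered: true });
```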

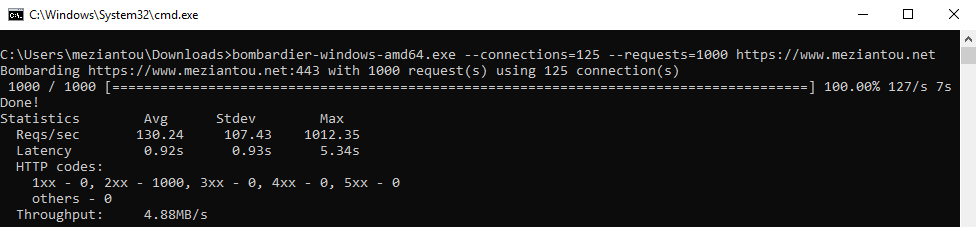

##Load testing using Bombardier

Bombardier is a free tool to measure the performance of your server by doing a load test. It works on Windows and Linux.

```shell
bombardier-windows-amd64.exe --connections=125 --requests=1000 https://www.meziantou.net
```

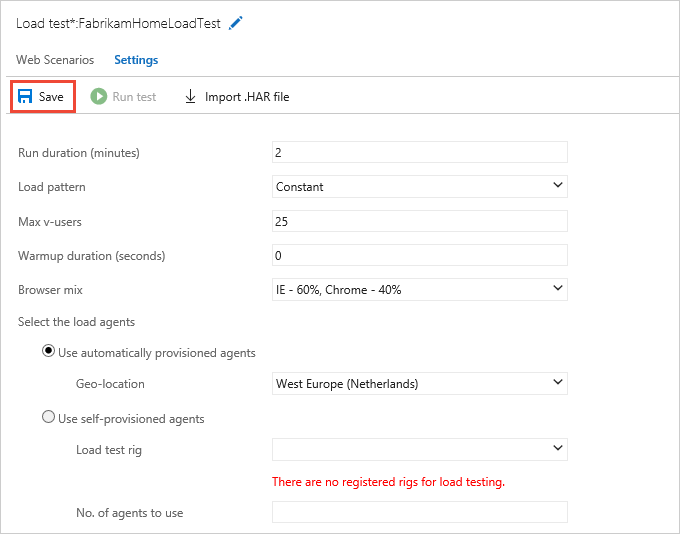

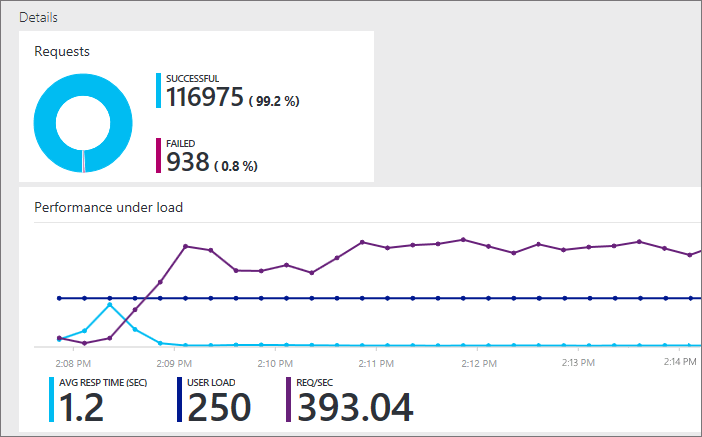

##Azure load testing

Microsoft Azure provides a load testing tool. This could be a better option than Bombardier because it has many more options to configure the requests. You can simulate an almost unlimited number of users, from multiple locations across the world, with different browsers. You can also compare multiple runs.
